App Stores, such as Apple’s App Store or Google Play, provide researchers the opportunity to conduct experiments with a large number of participants. If we collect data during these experiments, it may be necessary to ask for the users’ consent beforehand. The way we ask for the users’ consent can be crucial, because nowadays people are very sensitive to data collection and potential privacy violations.

We conducted a study suggesting that a simple “Yes-No” form is the best choice for researchers.

Tested Consent Forms

We (most of the credit goes to Niels Henze for conducting the study) tested four different ways of asking for consent to collect non-personal data. All consent forms contained the following text:

By playing this game you participate in a study that investigates the touch performance on mobile phones. While you play we measure how you touch but we DON’T transmit personalized data. By playing you actively contribute to my PhD thesis.

Checkbox Unchecked

The first consent form showed an unchecked checkbox next to a text reading “Send anonymous feedback”. To participate in the study, the user had to tick the checkbox and then press the “Okay” button.

Checkbox Checked

The second consent form is the same as the previous one, except that the checkbox is pre-checked. To participate in the study, the user merely has to click the “Okay” button.

Yes/No Button

The third consent form features two buttons reading “Okay” and “Nope”. To participate, the user has to click “Okay”. Clicking “Nope” ends the app immediately.

Okay Button

The fourth consent form contains only a single “Okay” button. By clicking “Okay” the user participates in the study. To avoid participation, the user has to end the app through the phone’s “home” or “return” buttons.

Study

These consent forms were integrated into a game called Poke the Rabbit! by Niels Henze. At first start, the application randomly selected one of the four consent forms. If the user agreed to participate in the study, the app transmitted the type of the consent form to a server.
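The assignment logic described above can be sketched as follows. This is a minimal illustration in Python, not the code used in Poke the Rabbit!; the form identifiers, the function names, and the payload layout are assumptions made for this example.

```python
import random
from typing import Optional

# The four consent-form variants from this post (identifiers are hypothetical).
CONSENT_FORMS = (
    "checkbox_unchecked",
    "checkbox_checked",
    "yes_no_buttons",
    "okay_button",
)


def pick_consent_form(rng: random.Random) -> str:
    """Pick one of the four forms uniformly at random (once, at first start)."""
    return rng.choice(CONSENT_FORMS)


def report_payload(form: str, accepted: bool) -> Optional[dict]:
    """Return what would be sent to the server, or None if nothing is sent.

    Only the type of the consent form is reported, and only after the
    user has agreed to participate.
    """
    if form not in CONSENT_FORMS:
        raise ValueError(f"unknown consent form: {form}")
    return {"consent_form": form} if accepted else None
```

With this design the server only ever sees installations where the user agreed, so the number of users who saw each form is not observed directly.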

Results

We collected data from 3,934 installations. The diagram below shows the conversion rate. The conversion rate was estimated by dividing the number of participants per form by 983.5, i.e. 3,934 divided by four (we assume perfect randomisation, i.e. each consent form was presented in 25% of the installations).
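The estimate can be written out as a small calculation. The sketch below is only an illustration of the arithmetic; the function names are made up for this example, and the participant count in the usage note is hypothetical, not a result from the study.

```python
def expected_exposures(total_installations: int, n_forms: int = 4) -> float:
    """Installations assumed to have seen each form under perfect randomisation."""
    return total_installations / n_forms


def estimated_conversion_rate(
    participants: int, total_installations: int = 3934, n_forms: int = 4
) -> float:
    """Participants recorded for one form divided by its assumed exposures."""
    return participants / expected_exposures(total_installations, n_forms)
```

For the 3,934 installations reported here, `expected_exposures(3934)` gives 983.5. A form with, say, 500 recorded participants (a made-up number) would then have an estimated conversion rate of 500 / 983.5, or roughly 51%.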

We were surprised by the high conversion rates. Only the consent form with the unchecked checkbox yielded a conversion rate that was too low.

Conclusions – use Yes/No Buttons

We suggest using the consent form with Yes-No buttons. The consent form with the checked checkbox may be considered unethical, since the user may not have read the text and was not forced to consider unchecking the checkbox. The consent form with the “Okay” button may be considered unethical, too, because users may not be aware that they can avoid data collection by using the phone’s hardware buttons. The “Yes-No” form, in contrast, forces users to think about their choice and offers a clear way to avoid participating in the study.

Yes-No buttons are ethically safe and resulted in the second-highest conversion rate.

Would you suggest otherwise? We are by no means saying that this is definitive! Please share your opinion (comments or mail)!

More Information

This work has been published in the position paper App Stores – How to Ask Users for their Consent? The paper was presented at the ETHICS, LOGS and VIDEOTAPE Ethics in Large Scale Trials & User Generated Content Workshop. It took place at CHI ’11: ACM CHI Conference on Human Factors in Computing Systems, which was held in May 2011 in Vancouver, Canada.

Acknowledgements

The authors are grateful to the European Commission, which has co-funded the IP HaptiMap (FP7-ICT-224675) and the NoE INTERMEDIA (FP6-IST-038419).

My colleague Benjamin and I started addressing this question in 2010. We developed a consumer-grade pedestrian navigation application called

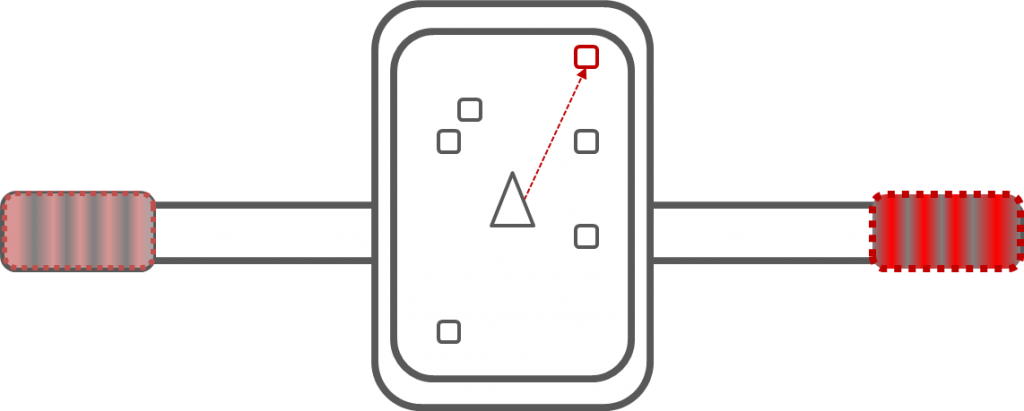

fore investigated whether the skin can be used to communicate the location of people and therefore be turned into a “friend sense”. Our solution is quite simple, as we wanted to implement it on everyday smartphones. The user can select to “follow” one of the friends. The application then calculates the relative location of this friend, such as “left-hand side”. This information is then encoded into vibration patterns. By learning the meaning of the patterns, the user can understand where the friend is without even taking the device out of the pocket.
